Process safety

Authors: Anne Disabato [2014], Tim Hanrahan [2014], Brian Merkle [2014], and Spencer Saldana [2015]

Stewards: David Chen, Jian Gong, and Fengqi You

Date Presented: January 19, 2014

Introduction

The safe design and operation of facilities is of paramount importance to every company that is involved in the manufacture of fuels, chemicals, and pharmaceuticals. Process safety focuses on the prevention of dangerous situations, such as fires, explosions, and the release of chemicals.

The American Institute of Chemical Engineers emphasizes a culture of process safety through four pillars[1]:

1. Commitment to Process Safety: a workforce that is actively involved and an organization that fully supports process safety as a core value will tend to do the right things in the right way at the right time – even when no one else is looking

2. Understanding Hazard and Risk: the foundation of a risk-based approach which will allow an organization to use this information to allocate limited resources in the most effective manner

3. Manage Risk: the ongoing execution of risk-based process safety (RBPS) tasks. Risk management can help a company to better deal with the resultant risks and sustain long-term accident-free and profitable operations

4. Learn from Experience: Metrics provide direct feedback on the workings of RBPS systems, and leading indicators provide early warning signals of ineffective process safety results. Organizations must use their mistakes and those of others as motivation for action and view them as opportunities for improvement.

For the prevention and management of specific safety hazards, such as fires, explosions, or the release of toxic chemicals, please see Process Hazards.

Safety Organizations and Terminology

Organizations

OSHA

The Occupational Safety and Health Administration (OSHA) is a federal agency that focuses on the enforcement of safety and health legislation.

EPA

The Environmental Protection Agency (EPA) is a U.S. agency whose purpose is the protection of the health of both humans and the environment through the writing and enforcement of regulatory laws.

DOT

The Department of Transportation (DOT) oversees federal highway, air, and maritime transportation, and can be involved in the safe transport of chemicals.

DOE

The Department of Energy (DOE) is a governmental department tasked with the advancement of energy technology in the United States.

Terminology

HS&E

Health, Safety, and Environmental - This term refers to all health, safety, and environmental concerns that arise at each stage of the design process. Companies are required to analyze each part of the process from an HS&E perspective to create a safe and healthy work environment.

MSDS

Material Safety Data Sheet - Every chemical has an MSDS, which contains the information needed to handle the substance safely. Relevant information includes how to identify the substance, its hazards, and how to respond to spills, fires, and exposure, among other things.

FMEA

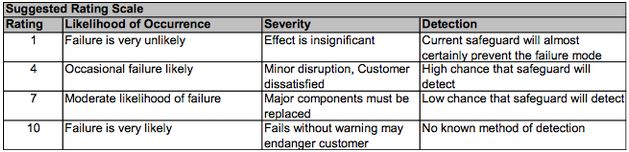

Failure Mode and Effects Analysis - FMEA is an early-stage approach to identifying critical technical risks using a semi-quantitative procedure. The analysis encompasses safety, environmental, and operational feasibility. When performing FMEA, engineers look for places in a potential design that could fail, quantify how likely each failure is and how severe its results would be, and then propose solutions to minimize the risk. A step-by-step guide to performing FMEA is shown below[2]:

- Brainstorm for failure modes

- For each FM, rate severity of impact (SEV, 1 - 10).

- For each FM, brainstorm for possible causes (there may be multiple).

- For each cause, rate likelihood of occurring (OCC, 1 - 10).

- Rate the probability that the systems currently in place will detect and prevent the problem before it has an impact (DET, 10 - 1). Do not assume that something that will be added to the design later will take care of the problem.

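- Calculate the overall Risk Priority Number, RPN = SEV × OCC × DET. Most practitioners restrict each rating to the values 1, 4, 7, and 10, as in the scale below, so that scores are more clearly separated. Note that the DET scale is inverse to SEV and OCC: a failure that is almost certain to be detected scores 1, and one that is unlikely to be detected scores 10.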
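The scoring step can be sketched in a few lines of Python. This is only an illustration of RPN = SEV × OCC × DET: the failure modes, causes, and ratings below are hypothetical and do not come from the course slides.

```python
from dataclasses import dataclass


@dataclass
class FailureMode:
    name: str
    cause: str
    sev: int  # severity of impact, 1 (minor) to 10 (severe)
    occ: int  # likelihood of the cause occurring, 1 (rare) to 10 (frequent)
    det: int  # detection rating, 1 (almost surely detected) to 10 (unlikely to be detected)

    def rpn(self) -> int:
        """Return the overall risk number, SEV x OCC x DET."""
        for rating in (self.sev, self.occ, self.det):
            if not 1 <= rating <= 10:
                raise ValueError("ratings must lie between 1 and 10")
        return self.sev * self.occ * self.det


def rank_by_rpn(modes):
    """Sort failure modes so the highest-risk items come first."""
    return sorted(modes, key=lambda m: m.rpn(), reverse=True)


# Hypothetical entries, rated on the 1, 4, 7, 10 scale.
modes = [
    FailureMode("Cooling water loss", "pump trip", sev=10, occ=4, det=4),
    FailureMode("Feed line leak", "gasket failure", sev=7, occ=4, det=7),
    FailureMode("Level sensor drift", "fouling", sev=4, occ=7, det=10),
]

for m in rank_by_rpn(modes):
    print(f"{m.name:20s} {m.cause:16s} RPN = {m.rpn()}")
```

In this made-up example the least severe failure mode ranks highest because it occurs often and is unlikely to be detected, which is the kind of trade-off the RPN is meant to expose.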

Figure 1: Suggested scale to be used for quantifying risk and detection of failures in FMEA. Taken from ChE 351 Slides.

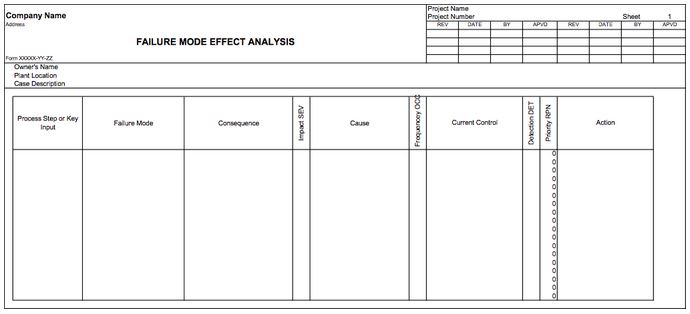

The collected information is organized in a spreadsheet like the one shown below:

Figure 2: Example spreadsheet used to organize FMEA data.

HAZOP

Hazard and Operability Study - For more information regarding HAZOP, please refer to Process Hazards.

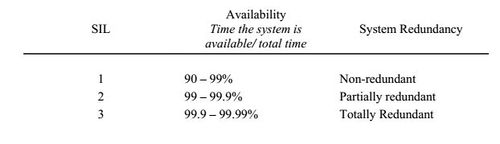

SIL

Safety Integrity Levels - A SIL expresses the relative level of risk reduction provided by a safety function, and it is also used to specify a target level of risk reduction. A SIL is determined based on a number of qualitative factors such as development process and safety life cycle management. Several methods are used to assign it, such as risk matrices, risk graphs, and layer of protection analysis (LOPA). Three levels of safety integrity are assigned depending on the “availability” of the safety instrumented system (SIS), as shown below[4]:

Figure 3: Table of safety integrity levels based on availability of system. Taken from [4].
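As a rough sketch of how an availability figure maps to a level, the function below uses the availability bands commonly quoted for low-demand safety functions (90 to 99% for SIL 1, 99 to 99.9% for SIL 2, 99.9% and above for SIL 3). These bands are an assumption made for illustration; the values in the figure from [4] take precedence if they differ.

```python
def sil_from_availability(availability: float) -> int:
    """Map SIS availability (a fraction between 0 and 1) to a SIL band.

    Assumed bands (not taken from the figure):
      SIL 1: 0.90  <= availability < 0.99
      SIL 2: 0.99  <= availability < 0.999
      SIL 3: availability >= 0.999
    Returns 0 when the availability is below the SIL 1 band.
    Only three levels are modelled, matching the text.
    """
    if not 0.0 <= availability <= 1.0:
        raise ValueError("availability must be a fraction between 0 and 1")
    if availability >= 0.999:
        return 3
    if availability >= 0.99:
        return 2
    if availability >= 0.90:
        return 1
    return 0


for a in (0.50, 0.95, 0.995, 0.9995):
    print(f"availability {a:.4f} -> SIL {sil_from_availability(a)}")
```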

A redundant system is one in which instrumentation is duplicated; a higher level of redundancy in the trip systems gives a higher SIL. The required SIL should be determined during a process hazard analysis and depends on the risk of operator exposure and injury.

1 Instrument

- If it signals, the plant goes down

- Probability of incident = probability of instrument failure

- Probability of spurious trip = false positive rate of one instrument

2 Instruments

- 1 out of 2 voting (1oo2): if either instrument signals, the plant goes down

    - Probability of incident reduced by duplication

    - Probability of spurious trip doubled

- 2 out of 2 voting (2oo2): both instruments must signal before the plant goes down

    - Probability of incident worse than with a single instrument (roughly twice the likelihood that the system fails to respond)

    - Probability of spurious trip reduced

3 Instruments

- 2 out of 3 voting (2oo3): any two instruments must signal before the plant goes down

    - Best overall trade-off between reducing incident rate and spurious trip rate

    - One malfunctioning instrument does not cause a trip or prevent detection of a real incident
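The trade-offs between these voting schemes can be checked with a short binomial calculation. The sketch below assumes that the instruments are identical and fail independently, which real installations only approximate because of common-cause failures. The function names and the 1% probabilities are hypothetical.

```python
from math import comb


def at_least(m: int, n: int, p: float) -> float:
    """Probability that at least m of n independent events (each with probability p) occur."""
    return sum(comb(n, j) * p**j * (1 - p) ** (n - j) for j in range(m, n + 1))


def voting(k: int, n: int, p_fail: float, p_false: float):
    """Evaluate k-out-of-n (koon) voting.

    p_fail:  probability that one instrument fails to signal a real incident
    p_false: probability that one instrument signals when nothing is wrong
    Returns (probability of a missed incident, probability of a spurious trip).
    """
    # A real incident is missed when fewer than k instruments respond,
    # i.e. when at least n - k + 1 instruments have failed.
    missed = at_least(n - k + 1, n, p_fail)
    # A spurious trip needs at least k false signals.
    spurious = at_least(k, n, p_false)
    return missed, spurious


P_FAIL = 0.01   # hypothetical value
P_FALSE = 0.01  # hypothetical value

for k, n in [(1, 1), (1, 2), (2, 2), (2, 3)]:
    missed, spurious = voting(k, n, P_FAIL, P_FALSE)
    print(f"{k}oo{n}: P(missed incident) = {missed:.6f}, P(spurious trip) = {spurious:.6f}")
```

With these values, 1oo2 cuts the chance of a missed incident a hundredfold while roughly doubling spurious trips, 2oo2 does the reverse, and 2oo3 lowers both relative to a single instrument.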

Safe Design

Inherently Safe Design

Inherently safe design of a particular process can be achieved by adhering to the following six strategies put forth by Turton et al.[3]:

1. Substitution: Avoid using or producing hazardous materials on the plant site. If the hazardous material is an intermediate product, for example, alternate chemical reaction pathways might be used. In other words, the most inherently safe strategy is to avoid the use of hazardous materials.

2. Intensification: Attempt to use less of the hazardous materials. In terms of a hazardous intermediate, the two processes could be more closely coupled, reducing or eliminating the amount of intermediate produced. The inventories of hazardous feeds or products can be reduced by enhanced scheduling techniques such as just-in-time (JIT) manufacturing.

3. Attenuation: Reducing, or attenuating, the hazards of materials can often be effected by lowering the temperature or adding stabilizing additives. By using materials under less hazardous conditions, the potential consequences of a leak can be reduced.

4. Containment: If the hazardous materials cannot be eliminated, they at least should be stored in vessels with mechanical integrity beyond any reasonably expected temperature or pressure excursion. This is an old but effective strategy to avoid leaks. However, it is not as inherently safe as substitution, intensification, or attenuation.

5. Control: If a leak of hazardous material does occur, there should be safety systems that reduce the effects. For example, chemical facilities often have emergency isolation of the site from the normal storm sewers, and large tanks for flammable liquids are surrounded by dikes that prevent any leaks from spreading to other areas of the plant. Scrubbing systems and relief systems in general are in this category. They are essential, because they allow controlled, safe release of hazardous materials, rather than an uncontrolled release from a vessel rupture.

6. Survival: If leaks of hazardous materials do occur and they are not contained or controlled, the personnel (and the equipment) must be protected. This lowest level of the hierarchy includes fire fighting, gas masks, and so on. Although essential to the total safety of the plant, the greater the reliance on survival of leaks rather than elimination of leaks, the less inherently safe the facility.

Assessing Preliminary Design

Pilot plants are necessary for designing effective plant equipment, but moving to the industrial scale of a chemical plant introduces dangers of its own. Scaling up without accurate literature and experimental data can be very dangerous.

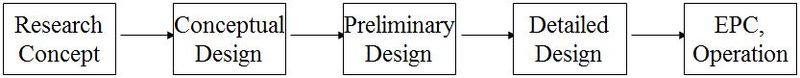

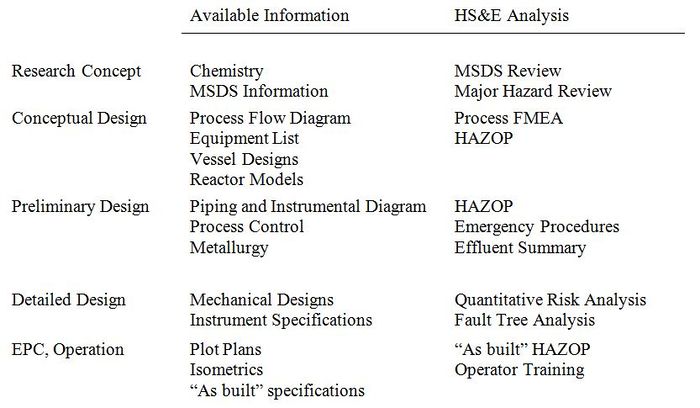

In every step of process design, the Health, Safety, and Environmental (HS&E) analysis must be carried out with the available technical information[5].

Figure 4: General steps in the design process and analysis to be carried out at each stage. Taken from [5].

Economic Cost of Safety

The cost of safety installations and fire protection systems typically ranges from 0.5 to 1.6% of the fixed-capital investment of a plant. Actual expenditures are often much higher than this, however, and they are difficult to estimate for a given plant.

In addition, designers examine the economic impact of safety and maintenance issues. For instance, they may determine that a different plant reactor configuration, together with better operator training facilities, would allow the plant to run more safely. These hidden costs must be considered when determining the economic feasibility of operation[5].

Other Process Safety Considerations

The safety and well-being of the consumers using the eventual product should be considered in process safety. The risks involved in using a product should be clearly communicated to the consumer by industrial leaders.

Human error is another safety risk that is difficult to quantify. The intervention of well-trained operators is a vital layer in process safety[6].

Case Study: Bhopal Disaster

On December 3, 1984, at the Union Carbide India Limited pesticide plant in Bhopal, India, water entered a storage vessel containing over 80,000 lbs. of methyl isocyanate (MIC), a chemical intermediate in the pesticide synthesis process. The resulting reaction caused a rapid increase in temperature accompanied by boiling, which caused toxic MIC vapors to escape from the tank. In addition, the MIC-water reaction produced methylamine and carbon dioxide gases, among other toxic products, which also contributed to the pressure increase [7]. These vapors passed into a scrubber and flare system that were not working at the time due to inadequate maintenance and safety practices. As a result of this accident, approximately 25 tons of MIC vapor were released, killing over 3,800 people immediately and injuring roughly 20,000 in the surrounding area.

As a result of the incident, Union Carbide was forced to pay $470 million, as well as fund a hospital in Bhopal that was used specifically to treat victims of the disaster. Cleanup of the plant site and further legal action remain unresolved. Bhopal sparked a worldwide discussion on chemical process safety, and caused Congress to create the U.S. Chemical Safety Board (CSB). The CSB has since cited the following reasons as causes for the disaster:

- No process hazard analysis

- Poorly maintained equipment and safety system

- Lack of emergency response planning

- Inadequate training for operators

The CSB has pushed chemical safety reform since its inception, urging the chemical industry to produce inherently safer designs, use better quality equipment, and develop more thorough risk management plans. A major criticism of the process was its lack of inherently safe design. Because MIC was an intermediate, there was no reason to keep large quantities in storage. A modern design would use the intermediate as it is made [8].

Although chemical process safety has come a long way since 1984, industrial chemical giants still battle problems similar to those at Bhopal to this day. In 2008, a disaster similar to the one in Bhopal could have occurred at a plant in Institute, West Virginia, originally designed by Union Carbide, after a runaway reaction caused a pressure buildup in a waste treatment vessel. The vessel exploded, killing two plant workers. Fortunately, the explosion missed a large MIC storage vessel, which could have been hit by shrapnel and released tons of MIC [9]. In 2013, an ammonium nitrate explosion killed 15 and seriously injured 200 in West, Texas, in a blast radius similar to the one experienced in Bhopal [10].

Case Study: Deepwater Horizon Explosion and Oil Spill

On April 20, 2010, on the Deepwater Horizon offshore drilling rig located in the Macondo Prospect, multiple explosions killed 11 workers and seriously injured 17. The rig burned for two days before sinking into the Gulf of Mexico. Key safety failures caused the well to spew 5 million barrels of oil into the Gulf of Mexico over the next 87 days, making the incident the largest offshore oil spill in U.S. history. Finally, the well was sealed by a “static kill,” the injection of heavy fluids and cement, at the leak point 5,000 feet below the surface.

The key safety failure identified by the CSB was the failure of the blowout preventer (BOP). This device, which is meant to prevent the filling of the annular space between the borehole and the well casing, is both electrically and hydraulically powered. It is connected to a rig by a large-diameter pipe called a riser. The system contains multiple pipe rams and annular preventers designed to prevent annular space buildup.

On the first night of the incident, a “kick” occurred, and a mixture of oil, water, and gases began to build up in the well and climb up the shaft. Drilling mud was injected to prevent kicks by creating a barrier. An upper annular preventer was also engaged when the buildup was discovered, but it failed. A pipe ram was then activated and succeeded. However, an immense pressure buildup caused the drill pipe to buckle, forcing it off center. This buckling was later explained as a result of effective compression, a phenomenon caused by microscopic irregularities and bends in the pipe material that result in a higher surface area on one side of the pipe.

Because the pipe was off center, the final failsafe, the Automatic Mode Function (AMF) or “deadman,” could not effectively shear the pipe and seal the well. This redundant control system consists of a yellow pod and a blue pod that work independently to seal the well in the event of a catastrophic failure in which communications, electric power, and hydraulic pressure connections are cut. Both the yellow and blue pods contained 9-volt and 27-volt batteries, which power solenoid valves. Unfortunately, the blue pod was miswired, so its 27-volt power supply was already drained when it was needed to make the blind shear blades cut the pipe. Fortunately, a 9-volt battery in the yellow pod was also miswired, which caused the blind shear ram to be engaged. However, this only partially sealed the well because of the pipe buckling.

The flammable mixture erupted onto the surface of the platform and found an ignition source, triggering a massive explosion. The spill was temporarily contained by a cap, and relief wells were eventually used to seal the well months later [11].

The White House Office of Energy and Climate Change Policy called the Deepwater Horizon oil spill the “worst environmental disaster the US has faced” [12]. Over 8,000 species were estimated to be affected by the spill due to the toxicity of the petroleum released, oxygen depletion, and the large quantities of Corexit, an oil dispersant that is toxic to marine life and was used in an untested manner [13, 14, 15].

BP, Transocean, and Halliburton were the major entities implicated in this tragedy. Investigations after the incident showed that essential safety documentation, including risk management and emergency procedure information, was missing. Accusations were mainly aimed at BP, with charges of recklessness and gross negligence [16]. In January 2013, Transocean was ordered to pay $1.4 billion for US Clean Water Act violations. BP was ordered to pay $2.4 billion, but additional penalties could reach $20 billion [17].

Case Study: Texas City Refinery Explosion

On March 23, 2005, at a BP refinery in Texas City, Texas, a hydrocarbon vapor cloud ignited, killing 15 workers and seriously injuring 170 others. Over the course of an 11-hour period, a combination of control failures, mismanagement, and worker fatigue resulted in the buildup and release of extremely hot, combustible vapor. The key process unit in this disaster was an isomerization unit, located next to wooden trailers for workers servicing an ultracracker unit.

In the early morning of March 23, operators initiated startup and pumped raffinate (liquid hydrocarbons) into a raffinate splitter tower used to separate gasoline components. A liquid level indicator and multiple high-level alarms monitored the tower liquid level. The level indicator could only measure up to 9 feet of liquid, and the written procedure called for a liquid level of about 6.5 feet. However, operators routinely filled the tower above 9 feet to minimize fluctuations and to prevent damage to a furnace. Hours later, the first high-level alarm was activated as the liquid level rose, but a second alarm higher up the tower failed to trigger. The feed was halted when the liquid had risen to about 13 feet, although operators had no way of knowing the exact height.

The lead operator relayed the startup activities to another operator and left the facility an hour before his shift ended. The morning operator arrived at 6 am to start his thirtieth consecutive day working a 12-hour shift and found a logbook entry that read, “Isom* Brought in some raff to unit, to pack raff with.” The day shift supervisor arrived an hour late, so he could not be briefed by the night shift supervisor. Recirculation then commenced in the tower, and more liquid was added. Additionally, conflicting instructions caused a liquid level regulating valve to remain closed for several hours, so liquid could not leave the tower. The furnace was then lit, and the supervisor left to attend to a family medical emergency.

At noon, the liquid level had risen to 98 feet, but the improperly calibrated liquid level indicator read 8.4 feet. At 12:41 pm, a high-pressure alarm prompted workers to manually open a chain valve, relieving pressure through the unit's pressure relief system by venting vapor to the atmosphere via an obsolete blowdown drum. Heat to the furnace was also reduced to lower the pressure. When operators became concerned about the outflow rate, the liquid level regulating valve was opened to release liquid from the tower to storage. This caused the liquid in the tower to begin to boil and spill into the overhead vapor line, exerting extreme pressure on the pressure relief system. At 1:14 pm, the three relief valves opened, sending the liquid to the blowdown drum, which overflowed into a municipal sewer and set off alarms, but a key level indicator in the blowdown drum failed. Flammable liquid erupted from the blowdown drum, formed a massive vapor cloud, and found an ignition source in a nearby idling pickup truck. The colossal blast ignited fires throughout the refinery, and over half the workers in the wooden trailers adjacent to the unit were killed immediately [18].

Investigations after the incident cited multiple failures to implement safety recommendations at the Texas City Refinery. Among these, the blowdown drum was to be replaced by a modern flare to burn off hydrocarbons. However, BP’s budget cuts prevented its replacement. The training and treatment of workers were also called into question, as fatigue, poor communication, and inadequate documentation likely contributed to the disaster. Decisions like the one to operate at an unsafe liquid level in order to prevent furnace damage also demonstrate the company’s fixation on the bottom line. BP was eventually fined $21 million by OSHA [19, 20].

References

1. American Institute of Chemical Engineers. http://www.aiche.org/ccps

2. Northwestern University. Chemical Engineering 351 Lecture Slides.

3. R.T. Turton, R.C. Bailie, W.B. Whiting, J.A. Shaeiwitz. Analysis, Synthesis, and Design of Chemical Processes. Prentice Hall: Upper Saddle River, 2003.

4. G.P. Towler, R. Sinnott. Chemical Engineering Design: Principles, Practice and Economics of Plant and Process Design. Elsevier, 2012.

5. L.T. Biegler, I.E. Grossmann, A.W. Westerberg. Systematic Methods of Chemical Process Design. Prentice-Hall: Upper Saddle River, 1997.

6. M.S. Peters, K.D. Timmerhaus. Plant Design and Economics for Chemical Engineers, 5th Ed. McGraw-Hill: New York, 2003.

7. Union Carbide Corporation. "Methyl Isocyanate" Product Information Publication, F-41443, November 1967.

8. Eckerman, I. The Bhopal Saga: Causes and Consequences of the World’s Largest Industrial Disaster. Universities Press Private Limited, 2005.

9. Blanc, P. Bhopal, 1984 – West Virginia near-miss, 2008. Psychology Today, December 2009. https://www.psychologytoday.com/blog/household-hazards/200912/bhopal-1984-west-virginia-near-miss-2008.

10. Reflections on Bhopal After Thirty Years Video, U.S. Chemical Safety Board, December 2014.

11. USCSB Deepwater Horizon Video, U.S. Chemical Safety Board, June 2014.

12. "Gulf of Mexico oil leak 'worst US environment disaster'". BBC News. 30 May 2010.

13. Biello, David (9 June 2010). "The BP Spill's Growing Toll On the Sea Life of the Gulf". Yale Environment 360. Yale School of Forestry & Environmental Studies. Retrieved 2010-06-14.

14. Butler, J. Steven (3 March 2011). "BP Macondo Well Incident. U.S. Gulf of Mexico. Pollution Containment and Remediation Efforts" (PDF). Lillehammer Energy Claims Conference. BDO Consulting. Retrieved 17 February 2013.

15. Froomkin, Dan (29 July 2010). "Scientists Find Evidence That Oil And Dispersant Mix Is Making Its Way Into The Foodchain". Huffington Post.

16. "DOJ accuses BP of 'gross negligence' in Gulf oil spill". CNN Money, September 2012.

17. "Transocean Agrees to Plead Guilty to Environmental Crime and Enter Civil Settlement to Resolve U.S. Clean Water Act Penalty Claims from Deepwater Horizon Incident". Department of Justice Office of Public Affairs. January 3, 2013.

18. Investigation Report: BP Refinery Explosion and Fire, U.S. Chemical Safety Board, 2008.

19. Lyall, Sarah. "In BP’s Record, a History of Boldness and Costly Blunders." New York Times, July 13, 2010.

20. Investigation Report: BP Refinery Explosion and Fire, U.S. Chemical Safety Board, 2008.